technical: Lost in Translation 3 - Converting Between Code Pages

In the first article in this series, we introduced the different z/OS code pages, including EBCDIC and Unicode. In the second, we talked about using ASCII, Unicode and EBCDIC on z/OS. But how do you convert between them?

Translation in Transmission

Translation between code pages is always an issue when we transfer files to and from the mainframe. Because of this, all file transfer products offer some translation features. Older-style file transmission products such as Barr/RJE and Axway CFT offer a way to create custom translation tables, and possibly choose between them for each individual transfer. This works well, but often means that someone has to manually enter or generate a translation table. Basic translation tables to convert between ISO8859-1 ASCII and EBCDIC-037 are usually included.
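To make the idea of a translation table concrete, here is a minimal Python sketch. It is not taken from any of the products above. It assumes Python's built-in `latin-1` and `cp037` codecs are acceptable stand-ins for ISO8859-1 and EBCDIC-037, and the names `ASCII_TO_EBCDIC` and `EBCDIC_TO_ASCII` are purely illustrative.

```python
# A single-byte translation table is just 256 bytes: the byte at
# position N is the value that input byte N is translated to.
ALL_BYTES = bytes(range(256))

# Assumption: "latin-1" stands in for ISO8859-1, "cp037" for EBCDIC-037.
ASCII_TO_EBCDIC = ALL_BYTES.decode("latin-1").encode("cp037")
EBCDIC_TO_ASCII = ALL_BYTES.decode("cp037").encode("latin-1")

# Applying a table is a straight byte-for-byte lookup.
ascii_text = b"Hello"
ebcdic_text = ascii_text.translate(ASCII_TO_EBCDIC)
round_trip = ebcdic_text.translate(EBCDIC_TO_ASCII)
```

Because both code pages hold the same 256 characters in a different order, the two tables are inverses of each other, and a round trip returns the original bytes.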

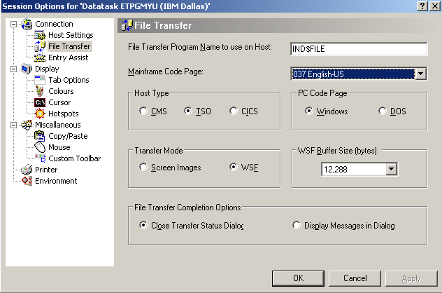

Today's 3270 emulation software provides similar functionality without the need to manually create a translation table. These products use the TSO/E IND$FILE utility that comes free with TSO/E, and usually provide a drop-down option to specify the mainframe code page. Here's an example from Giant Software's QWS3270.

However, they only provide basic translation between ASCII and EBCDIC.

The z/OS FTP server offers a lot more options. Text files are automatically translated between ASCII and the default EBCDIC code page when sent between z/OS and an ASCII-based platform such as Windows. But this is just the beginning. The z/OS FTP SBDataconn (or sbd) site command can be used to specify the source and destination code pages. Look at the following command, issued from the sender, that sets the source code page as EBCDIC-273 (German) and the destination to our old favourite EBCDIC-037:

quote site sbd=(IBM-273,IBM-037)

Now, any FTP put or get command will translate the file between these two code pages. Because this command is unique to the z/OS FTP server, we use the quote command to send the site command to z/OS. Although we've specified source and destination code pages, we could also have entered a z/OS dataset or USS file with a custom translate table. In this case, the above command would become something like:

quote site sbdataconn=tcpip.transtbl1
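The effect of the code-page pair form (IBM-273 to IBM-037) can be illustrated in a few lines of Python. This is only a sketch of the byte-level result, not of how the FTP server does it. It assumes Python's `cp273` and `cp037` codecs match IBM-273 and IBM-037, and `convert_sbcs` is a hypothetical helper name.

```python
def convert_sbcs(data: bytes, source: str, target: str) -> bytes:
    """Convert bytes from one single-byte code page to another."""
    # Decode to Unicode text first, then encode in the target code page.
    return data.decode(source).encode(target)


german = "Grüße"
as_273 = german.encode("cp273")                    # German EBCDIC
as_037 = convert_sbcs(as_273, "cp273", "cp037")    # US/Canada EBCDIC

# Same text and same length, but the accented characters sit at
# different byte values in the two code pages.
print(as_273.hex(" "))
print(as_037.hex(" "))
```

Both code pages are EBCDIC, yet the bytes differ, which is why a file written in one cannot simply be read as the other.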

The MBDataconn (or mbd) site command is the same, but works for multibyte code pages such as UTF-8. But before we can use it, z/OS FTP must be set into multibyte mode using the site encoding command like this:

quote site encoding=mbcs

quote site mbd=(UTF-8,IBM-037)

The encoding command is not needed if the encoding mbcs parameter is specified in the z/OS FTP.DATA parameter file.
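The same two site commands can be scripted rather than typed. The sketch below uses Python's standard `ftplib`, whose `sendcmd()` sends a raw command line much as a client's quote command does. The function names, host, credentials and file names are placeholders, and the code has not been run against a real z/OS FTP server.

```python
from ftplib import FTP


def enable_multibyte_translation(ftp, code_pages="(UTF-8,IBM-037)"):
    """Send the two SITE commands from the text; return the server replies."""
    # sendcmd() raises an ftplib error if the server rejects a command.
    return [
        ftp.sendcmd("SITE ENCODING=MBCS"),
        ftp.sendcmd(f"SITE MBDATACONN={code_pages}"),
    ]


def upload_text(host, user, password, local_path, remote_name):
    """Log on, switch to multibyte translation, and send one text file."""
    with FTP(host) as ftp:
        ftp.login(user, password)
        enable_multibyte_translation(ftp)
        with open(local_path, "rb") as source:
            # storlines() uses an ASCII-type (text) transfer.
            ftp.storlines(f"STOR {remote_name}", source)
```

Keeping the site commands in one helper means the translation settings are issued in the right order every time a session is opened.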

The z/OS FTP server offers another way to handle UCS-2 (or UTF-16) translations:

quote type u 2

quote site noucstrunc noucssub ucshostcs=ibm-037

The type u 2 command sets the source type as UTF-16. The ucshostcs command sets the destination code page.
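The noucssub option matters when the incoming text contains a character the host code page cannot hold. As we understand it, noucssub makes the transfer fail in that case, rather than quietly replacing the character with a substitute. The Python sketch below imitates both behaviours. It assumes big-endian UTF-16 input and the `cp037` codec for IBM-037. `ucs2_to_host` and its `substitute` flag are hypothetical names, not FTP settings.

```python
def ucs2_to_host(data: bytes, host_codec: str = "cp037",
                 substitute: bool = False) -> bytes:
    """Convert UTF-16 (big-endian) bytes to a single-byte host code page."""
    text = data.decode("utf-16-be")
    # "replace" swaps any unconvertible character for "?";
    # "strict" raises UnicodeEncodeError instead.
    return text.encode(host_codec, errors="replace" if substitute else "strict")


incoming = "Price: 10€".encode("utf-16-be")   # the euro sign is not in IBM-037

with_substitution = ucs2_to_host(incoming, substitute=True)

try:
    ucs2_to_host(incoming, substitute=False)
    failed = False
except UnicodeEncodeError:
    failed = True                             # the noucssub-style outcome
```

Failing loudly is usually the safer choice for business data, because a substituted character cannot be recovered afterwards.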

TCP/IP (and TCP/IP applications such as FTP) can also use custom translation tables. These tables are normally in the TCPXLBIN dataset, and generated using the CONVXLATE command supplied with TCP/IP. FTP transfers can also name a particular translation dataset created with CONVXLATE.

Network Translation

File transfers are far from the only data translation necessary. In fact, any network traffic between z/OS and another platform may need to be translated. The brunt of this work is done by TCP/IP. For example, TCP/IP must translate data between z/OS and a Windows-based Telnet client. Translation also occurs for the many TCP/IP applications such as SMTP, NFS and SMB.

In the past, TCP/IP used translation tables in the TCPXLBIN dataset to specify all translation. Today Unicode Services is a much better option. We'll talk about Unicode Services in a moment. But TCP/IP doesn't do all the work. Let's take CICS Web services as an example. To display web pages or return XML-style output, it must translate legacy application data from EBCDIC to UTF-8 or something similar.

CICS automatically performs this translation, using the default EBCDIC code page specified in the LOCALCCSID SIT parameter. It will also read and use any overriding Content-Type or Accept-Charset headers from Web clients that request different code pages. In older releases, CICS used the DFHCNV table for code conversion. Today, CICS uses Unicode Services. CICS also provides Unicode Services-style data conversions with commands to put and get data from its container resources.

IMS is also well into this area. IMS Connect is the weapon of choice; however, its features are more basic. IMS Connect message exits perform all incoming and outgoing message translation, including encryption and compression if needed. IMS Connect clients can specify which message exit to use.

WebSphere MQ also provides automatic translation. Queue managers have a default CCSID, and applications can specify the CCSID of message data when putting messages onto the queue. Applications retrieving messages can choose whether the messages are translated or not.
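The idea of tagging each message with a CCSID, and letting the receiver decide whether to convert, can be modelled in a few lines of Python. This is a conceptual sketch only and does not use the MQ API (real applications request conversion with the MQGMO_CONVERT option on MQGET). The `Message` class, `get_message` function and `CCSID_CODECS` table are hypothetical, and the table covers only a handful of CCSIDs.

```python
from dataclasses import dataclass

# Assumption: these Python codecs correspond to the listed CCSIDs.
CCSID_CODECS = {37: "cp037", 273: "cp273", 500: "cp500",
                819: "latin-1", 1208: "utf-8"}


@dataclass
class Message:
    ccsid: int     # code page the data is encoded in
    data: bytes


def get_message(msg: Message, target_ccsid: int, convert: bool = True) -> Message:
    """Return the message, converted to the receiver's CCSID if requested."""
    if not convert or msg.ccsid == target_ccsid:
        return msg                      # hand the bytes over untouched
    text = msg.data.decode(CCSID_CODECS[msg.ccsid])
    return Message(target_ccsid, text.encode(CCSID_CODECS[target_ccsid]))


sent = Message(37, "ORDER 42".encode("cp037"))      # put by a z/OS application
converted = get_message(sent, 1208)                 # receiver wants UTF-8
unconverted = get_message(sent, 1208, convert=False)
```

The tag is what makes automatic translation possible: without knowing the source CCSID, the receiver could only guess how to interpret the bytes.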

Unicode Services

In the past, any data transmission software needed to do its own data translation. These products provided their own translation tables, or forced users to create them. Today, this can all be done using z/OS Unicode Services, a free component of z/OS. Unicode Services comes complete with many standard conversion tables, so there's a good chance you won't need to create your own.

Although it sounds like Unicode Services is only for Unicode, it actually provides services for translation between any defined code pages: EBCDIC, ASCII and Unicode. It can also perform case conversion, normalisation, collation and stringprep conversion for Unicode strings.
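For readers unfamiliar with normalisation and case conversion, the short Python sketch below shows what these operations do to a Unicode string. It uses Python's standard `unicodedata` module purely as an illustration and says nothing about how Unicode Services implements them.

```python
import unicodedata

# The same visible character can be stored in two ways in Unicode.
composed = "\u00e9"        # é as one code point
decomposed = "e\u0301"     # e followed by a combining acute accent

# The strings look identical but do not compare equal until normalised.
equal_before = composed == decomposed
equal_after = unicodedata.normalize("NFC", decomposed) == composed

# Case conversion is not always one character to one character.
upper = "straße".upper()   # the German sharp s becomes "SS"
```

Normalising both sides before comparing or sorting avoids mismatches between strings that look the same on screen.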

Conversion tables are loaded by Unicode Services, either on startup from a list in the CUNUNIxx parmlib member, or automatically the first time they are requested. Applications can use Unicode Services by using:

- The Unicode Services stub routines from Assembler, C or REXX.

- The C/C++ iconv() family of functions.

- The COBOL DISPLAY-OF (to convert to any CCSID) and NATIONAL-OF (to convert to UTF-16) functions.

- The PL/I MEMCONVERT function.

Unicode Services is used by many systems, including TCP/IP and its applications such as SMTP, FTP and NFS.

Converting Files

So far, we've only looked at converting network traffic. But what if you have an existing file to convert?

The easiest option is to use the USS iconv command, which also hops on the Unicode Services bandwagon. To convert a USS file from ASCII to EBCDIC, the USS command is:

iconv -f iso8859-1 -t IBM-1047 source.txt > dest.txt

The -f switch specifies the source code page, -t the destination. By default, iconv sends output to the terminal, so we've redirected the output to the file dest.txt. iso8859-1 is the standard ASCII code page. iconv handles both single-byte and multi-byte code pages with ease.
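For comparison, the same kind of file conversion can be sketched in Python for use on a workstation. `convert_file` is a hypothetical helper, not part of iconv. Python's standard library has no IBM-1047 codec, so this sketch uses `cp037` as the EBCDIC target instead, and the file names are created in a temporary directory purely for the demonstration.

```python
import tempfile
from pathlib import Path


def convert_file(src_path, dst_path, from_code, to_code, chunk_size=64 * 1024):
    """Re-encode a text file from one code page to another, chunk by chunk."""
    # newline="" stops Python from altering line-end characters.
    with open(src_path, "r", encoding=from_code, newline="") as src, \
         open(dst_path, "w", encoding=to_code, newline="") as dst:
        for chunk in iter(lambda: src.read(chunk_size), ""):
            dst.write(chunk)


with tempfile.TemporaryDirectory() as tmp:
    source = Path(tmp) / "source.txt"
    dest = Path(tmp) / "dest.txt"
    source.write_bytes("Hello, z/OS\n".encode("latin-1"))
    convert_file(source, dest, "latin-1", "cp037")   # like -f and -t
    converted = dest.read_bytes()
```

Reading in chunks keeps memory use flat for large files, which is also why iconv works as a stream.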

iconv can also be called from batch using the Language Environment EDCICONV utility:

//ICONV EXEC PGM=EDCICONV,

// PARM=('FROMCODE(UTF-8),TOCODE(IBM-037)')

//STEPLIB DD DISP=SHR,DSN=CEE.SCEERUN

//SYSUT1 DD DISP=SHR,DSN=DAVIDS.INPUT.DSET

//SYSUT2 DD DISP=SHR,DSN=DAVIDS.OUTPUT.DSET

//SYSPRINT DD SYSOUT=*

//SYSIN DD DUMMY

In the above example, we're converting a multibyte code page to single-byte, so the record lengths of the input and output datasets will be different. It's a good idea to use RECFM=VB with a nice big record length to make sure there is enough space. iconv can also be called as a TSO/E command using the Language Environment ICONV CLIST:

ICONV 'MY.SOURCE.FILE' 'MY.CONVERTD.FILE' FROMCODE(IBM-037) TOCODE(IBM-1047)
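Returning to the record-length point made for the UTF-8 to IBM-037 batch job, a quick Python check shows why the lengths differ. The sample records are made up, and `cp037` is assumed to match IBM-037.

```python
# Made-up sample records containing accented characters.
records = ["Zürich", "São Paulo", "London"]

lengths = []
for record in records:
    utf8_bytes = record.encode("utf-8")
    ebcdic_bytes = utf8_bytes.decode("utf-8").encode("cp037")
    # Each accented character takes two bytes in UTF-8, one in IBM-037.
    lengths.append((len(utf8_bytes), len(ebcdic_bytes)))
    print(f"{record!r}: UTF-8 {len(utf8_bytes)} bytes, "
          f"IBM-037 {len(ebcdic_bytes)} bytes")
```

The difference varies from record to record, so a fixed record length that suits one side will not necessarily suit the other. Going the other way, from single-byte to UTF-8, records grow rather than shrink.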

Although iconv is the most common conversion utility, it isn't the only choice. The USS pax command can create archive files intended for platforms using other code pages. For example, the command

pax -wf tpgm.pax -o from=IBM-1047,to=ISO8859-1 /tmp/my/dir

backs up the directory /tmp/my/dir which is in EBCDIC (IBM-1047) into an archive file that will automatically be restored in ASCII (ISO8859-1).

Similarly an ASCII pax file can be extracted as EBCDIC using the command:

pax -o to=IBM-1047,from=ISO8859-1 -r < infile.tar

Recent modifications to DFSORT have added the TRAN=ETOA and TRAN=ATOE statements, allowing data, and even individual fields, to be converted between ASCII and EBCDIC. These statements are available from z/OS 1.13, or previous z/OS releases with PTF UK90025.
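Field-level conversion matters because records often mix text with binary or packed-decimal data, which must not be translated. The Python sketch below illustrates the point with a made-up record layout. `etoa_field` is a hypothetical helper, and the `cp037` and `latin-1` codecs are stand-ins that may not match the tables DFSORT actually uses.

```python
def etoa_field(record: bytes, start: int, length: int) -> bytes:
    """Convert one EBCDIC text field to ASCII, leaving the rest untouched."""
    field = record[start:start + length]
    converted = field.decode("cp037").encode("latin-1")
    return record[:start] + converted + record[start + length:]


# Made-up layout: 10-byte name in EBCDIC, then 3-byte packed decimal +12345.
name = "SMITH".ljust(10).encode("cp037")
balance = bytes([0x12, 0x34, 0x5C])
record = name + balance

field_only = etoa_field(record, 0, 10)                      # name converted
whole_record = record.decode("cp037").encode("latin-1")     # everything converted
```

Converting only the name field keeps the packed-decimal bytes intact, while converting the whole record changes them and corrupts the number.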

Roundup

The amount of communication between z/OS and other platforms is rapidly increasing. What's more, many companies are centralising their z/OS applications, so that one application may work with users in different geographical areas, using different languages. Because of this, understanding and working with different code pages becomes more and more important. In this series of three articles, we've looked at the different code pages, how data can be stored in each of them, and how to convert between them.

David Stephens
