technical: In-Order Delivery, MQ and Queue Sharing Groups

Here's a fact: IBM MQ doesn't always guarantee in-order delivery. So, if one application PUTs five messages on a queue, another may not GET these in the order they were PUT. So how can we guarantee in-order delivery? And how does a queue sharing group (QSG) affect this?

We faced these exact questions in a project we're still working on with our partners, CPT Global. Here are the answers we found, and the problems we unearthed.

The Project

Over the past 18 months, we've been working on a project to move applications to Sysplex: active-active. As part of this project, we needed to implement an IBM MQ queue sharing group (QSG) servicing CICS regions.

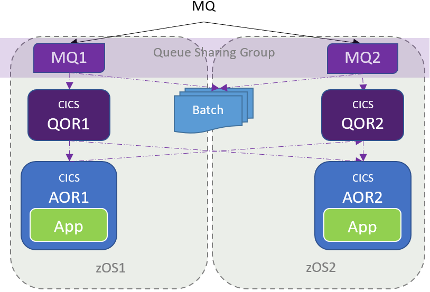

Our final configuration looked something like this:

Two queue managers (MQ1 and MQ2) on two z/OS systems in the same MQ queue sharing group. Triggering via queue-owning CICS regions (QOR1 and QOR2), routing to the application in the application owning CICS regions (AOR1 and AOR2). Or in other words, a CICSPlex.

So, how did our applications ensure in-order processing?

One Task

To perform in-order processing, only one task can open an incoming queue at a time. Before QSG, our applications achieved this in two ways:

- CICS transaction classes: each transaction belonged to a transaction class with a maximum task value of 1: only one transaction can run at a time.

- ENQ: Transactions would perform an EXEC CICS ENQ before opening the MQ queue. If the ENQ failed, another transaction was doing the job, and the first transaction ended.

Another option could have been to open the queue for exclusive use.

With our QSG and CICSPlex, this had to change:

- CICS transaction classes didn't work. Transaction classes only work at the CICS level. With a CICSPlex, we have two (or more) CICS regions that could possibly open a queue. Even with transaction classes, two tasks (one in each CICS region) could open the queue. The solution was to change to use CICS ENQs. Which brings us to ...

- ENQ: CICS ENQs also work at the CICS level. To get serialization in a CICSPlex, we needed to change these ENQs to CICS global ENQs: applicable to the entire sysplex.
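To see why the scope of the serialization matters, here is a minimal Python sketch. It is a toy model, not CICS code: `EnqTable`, `try_enq` and `start_readers` are hypothetical names, and the queue name is invented. Giving each region its own table models region-level ENQs; sharing one table models a sysplex-wide ENQ.

```python
class EnqTable:
    """Toy stand-in for an ENQ resource table; not a CICS API."""

    def __init__(self):
        self._owners = {}  # resource name -> owning region

    def try_enq(self, resource, owner):
        # Succeeds only if nobody already holds the resource in THIS table.
        if resource in self._owners:
            return False
        self._owners[resource] = owner
        return True


def start_readers(tables, resource):
    """Each region tries to ENQ in its table; return the regions that got through."""
    return [region for region, table in tables.items()
            if table.try_enq(resource, region)]


# Region-scoped ENQ: every region has its own table, so both succeed.
local_tables = {"AOR1": EnqTable(), "AOR2": EnqTable()}
local_winners = start_readers(local_tables, "APP.INPUT.QUEUE")

# Sysplex-scoped ENQ: both regions share one table, so only the first succeeds.
shared = EnqTable()
global_tables = {"AOR1": shared, "AOR2": shared}
global_winners = start_readers(global_tables, "APP.INPUT.QUEUE")

print(local_winners)   # ['AOR1', 'AOR2']
print(global_winners)  # ['AOR1']
```

With region-scoped tables, two readers end up on the same queue. With a shared table, the second reader is turned away and can end, as in the original ENQ design.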

Again, opening the MQ queue with exclusive access would also have worked here.

Framework

Suppose our queue fills up. Incoming messages will now be put on the dead-letter queue. When the original queue is freed up, more incoming messages are put on the queue, and processed. However, our messages on the dead-letter queue aren't: we've lost our in-order processing.
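The queue-full scenario above can be sketched as a small Python simulation. The maximum depth of 3, the message numbers and the point at which the dead-letter queue is replayed are all assumptions made for illustration. Nothing here calls MQ.

```python
from collections import deque

MAX_DEPTH = 3  # hypothetical maximum queue depth


def put(queue, dlq, msg):
    # A full queue diverts the message to the dead-letter queue.
    if len(queue) >= MAX_DEPTH:
        dlq.append(msg)
    else:
        queue.append(msg)


def drain(queue, processed):
    # The consumer processes everything currently on the queue.
    while queue:
        processed.append(queue.popleft())


queue, dlq, processed = deque(), deque(), []

for n in (1, 2, 3, 4, 5):   # 4 and 5 arrive while the queue is full
    put(queue, dlq, n)
drain(queue, processed)      # the queue frees up

for n in (6, 7):             # new arrivals go straight onto the queue
    put(queue, dlq, n)
drain(queue, processed)

drain(dlq, processed)        # the dead-letter messages are handled last

print(processed)  # [1, 2, 3, 6, 7, 4, 5]
```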

This is one of a couple of scenarios where MQ can lose in-order delivery. And there are no easy solutions. Applications need to add processing to check for in-order processing, and handle things when the messages are out of order.
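One possible shape for that extra processing is a sequence check. This is a sketch only, and assumes every message carries a sender-assigned sequence number, which the project described here may or may not have used. `deliver_in_order` is a hypothetical helper that holds back early arrivals until the gap before them is filled.

```python
def deliver_in_order(messages, first_seq=1):
    """Yield (seq, payload) pairs in sequence order, holding back early arrivals."""
    expected = first_seq
    held = {}  # seq -> payload for messages that arrived too early
    for seq, payload in messages:
        held[seq] = payload
        # Release every message that is now contiguous with what was delivered.
        while expected in held:
            yield expected, held.pop(expected)
            expected += 1


# Arrival order after a dead-letter queue detour (sequence numbers are invented).
arrivals = [(1, "a"), (2, "b"), (3, "c"), (6, "f"), (7, "g"), (4, "d"), (5, "e")]
ordered = list(deliver_in_order(arrivals))

print([seq for seq, _ in ordered])  # [1, 2, 3, 4, 5, 6, 7]
```

The trade-off is that messages 6 and 7 wait until 4 and 5 turn up, so a real implementation would also need a policy for gaps that never fill.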

Our customer solved this by developing their own MQ framework: a CICS-based application to handle these situations. Used for only some queues, this framework removed the need for applications to do this work themselves.

With a QSG, we needed to make some changes to this framework. In particular, we had to make this CICS application CICSPlex-aware.

After QSG: The Glitch

Before CICSPlex, there was only one CICS region that could open a queue. After CICSPlex, there were two CICS regions: one on each z/OS system. So, consider this situation.

Suppose we have an application on AOR1 that is accepting messages from a queue. It is reading multiple messages for each unit of work: say one syncpoint every 100 messages read (good for performance). Suppose that midway through a unit of work, zOS1 crashes hard.

In a QSG, MQ is pretty good. The surviving MQ queue manager (MQ2) will realise that things aren't great, and back out any GETs from AOR1 that were uncommitted: any GETs between the last syncpoint, and when zOS1 crashed. Now AOR2 can open the queue, and continue to process messages in-order. At our site, we tested this, and it was successful. So far, so good.

Suppose this zOS1 crash happened in the middle of a two-phase commit. Let's spell it out:

- Our program in AOR1 does a SYNCPOINT request.

- AOR1 notifies MQ1 to prepare to commit.

- zOS1 crashes.

Now MQ has a problem. MQ2 will realise that zOS1 has crashed, and attempt to back out any uncommitted changes. However, it's not sure what to do. We are in the middle of a two-phase commit, so it can't back out changes, nor can it commit them. It's stuck.

Now AOR2 opens the queue, and begins processing. It will skip over the uncommitted messages. When AOR1 recovers, it will tell MQ1 to back out the in-doubt GETs. These messages will be 'replaced' on the queue ready for processing: but after a bunch of other messages. We've lost our order.
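The glitch can be sketched as a small Python simulation. It is a toy model under stated assumptions: eight messages, three of them in doubt when zOS1 crashes, and a plain `deque` standing in for the shared queue. It does not model MQ internals.

```python
from collections import deque

queue = deque(range(1, 9))  # messages 1..8 on the shared queue
processed = []

# AOR1 GETs messages 1..3 under syncpoint. The prepare is sent, then zOS1
# crashes: these GETs are now in doubt and invisible to other readers.
in_doubt = [queue.popleft() for _ in range(3)]

# AOR2 opens the queue and processes whatever it can see.
while queue:
    processed.append(queue.popleft())

# AOR1 restarts and backs out the in-doubt GETs, so the messages reappear.
queue.extend(in_doubt)
while queue:
    processed.append(queue.popleft())

print(processed)  # [4, 5, 6, 7, 8, 1, 2, 3]
```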

A Solution To The Glitch

So, what's our solution? MQCONNX. Options: connection tag name = queue name + SND or RCV. MQCNO-OPTIONS = MQCNO-SERIALIZE-CONN-TAG-QSG.

Let's go through this a bit slower. We define a connection tag made up of the queue name, plus characters to identify the send or receive end. As we have multiple tasks opening multiple queues at the same time, we need a connection tag that we can identify with a queue. As another CICS within the QSG may also open this queue at the other end, we use the SND or RCV characters so they can't get in each other's way.
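As an illustration, here is a minimal Python sketch of building such a tag. The connection tag field in MQCNO is 128 bytes long. The helper name `build_conn_tag`, the null padding, the ASCII encoding and the queue name are all assumptions for this sketch. A real z/OS program would build the tag in its own language and code page.

```python
MQ_CONN_TAG_LENGTH = 128  # the MQCNO connection tag is a 128-byte field


def build_conn_tag(queue_name, role):
    """Return a fixed-length tag: queue name + 'SND' or 'RCV', null padded."""
    if role not in ("SND", "RCV"):
        raise ValueError("role must be 'SND' or 'RCV'")
    tag = (queue_name.strip() + role).encode("ascii")  # encoding is an assumption
    if len(tag) > MQ_CONN_TAG_LENGTH:
        raise ValueError("connection tag too long")
    return tag.ljust(MQ_CONN_TAG_LENGTH, b"\x00")      # padding is an assumption


rcv_tag = build_conn_tag("APP.INPUT.QUEUE", "RCV")
snd_tag = build_conn_tag("APP.INPUT.QUEUE", "SND")

print(len(rcv_tag))        # 128
print(rcv_tag != snd_tag)  # True
```

Because the two ends of the same queue get different tags, a sender and a receiver never block each other, while two receivers for the same queue always collide.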

All applications that GET messages and require in-order delivery specify an MQCONNX MQI call before opening the queue. So, what does this give us? Let's step through things again:

- Our program in AOR1 (PGM1) does our MQCONNX, MQOPEN.

- PGM1 begins GETting messages.

- PGM1 does a SYNCPOINT request.

- AOR1 notifies MQ1 to prepare to commit.

- zOS1 crashes.

- A program on AOR2 (PGM2) is triggered.

- PGM2 does an MQCONNX and fails.

So, how does this help? Because of our MQCONNX command and options, when PGM2 tries to do an MQCONNX, it fails as there are uncommitted operations on the queue. AOR2 cannot open our queue.
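The steps above can be sketched as a toy Python model of a QSG-wide tag registry. Everything here is hypothetical: `QsgTagRegistry`, `connx`, `resolve` and `ConnTagInUse` are illustrative names, not MQ calls or reason codes. The model assumes, as described above, that the tag stays held while the crashed region has in-doubt work.

```python
class ConnTagInUse(Exception):
    """Hypothetical stand-in for the failure a second MQCONNX would see."""


class QsgTagRegistry:
    """Toy model of QSG-wide connection tag serialization; not an MQ API."""

    def __init__(self):
        self._holders = {}  # tag -> region currently holding it

    def connx(self, tag, region):
        holder = self._holders.get(tag)
        if holder is not None and holder != region:
            raise ConnTagInUse(f"{tag} is held by {holder}")
        self._holders[tag] = region

    def resolve(self, region):
        # The region has restarted and settled its in-doubt work: free its tags.
        for tag in [t for t, r in self._holders.items() if r == region]:
            del self._holders[tag]


qsg = QsgTagRegistry()
tag = "APP.INPUT.QUEUERCV"
events = []

qsg.connx(tag, "AOR1")
events.append("PGM1 connected")
# zOS1 crashes in the middle of the two-phase commit: the tag stays held.

try:
    qsg.connx(tag, "AOR2")
    events.append("PGM2 connected")
except ConnTagInUse:
    events.append("PGM2 refused")

qsg.resolve("AOR1")     # AOR1 restarts and backs out its in-doubt GETs
qsg.connx(tag, "AOR2")  # now the queue can be processed again
events.append("PGM2 connected")

print(events)  # ['PGM1 connected', 'PGM2 refused', 'PGM2 connected']
```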

I know what you're thinking: "you don't code MQCONN or MQCONNX when running in CICS." And you're right: you don't have to. But if you want to specify connection options, you must code MQCONNX. Don't forget to code MQDISC at the end of your program if you do.

Discussion

By now, you're probably wondering 'how does this solve the problem?' Well, we've protected our in-order processing by stopping AOR2 from processing the queue. But at a cost: the queue is frozen. We need to restart AOR1 so it can 'settle-up' with the QSG, and back out uncommitted operations. And we need to do this fast. We're working to find ways to quickly restart AOR1 on any available z/OS system to release locks, and get things back running.

It's not only our application. That MQ framework that was coded also needed to add connection tag processing to ensure in-order delivery.

It doesn't sound pretty, does it? Either we lose in-order processing, or our processing stalls. And this is the question our applications have asked themselves: which is more important? Some have decided that they can live with some messages processed out of order to eliminate the chance of processing stopping. Others haven't, and used the connection tag solution above.

Conclusion

MQ doesn't always guarantee in-order delivery. If a program really needs things in-order, it needs to do some processing to make it happen. Even then, a QSG can cause problems with this, as a second process (CICS region) can now compete for our queue. We've developed a solution with connection options to address one of these issues.

David Stephens
